
Robots Could ‘Kill Us Out of Kindness’ If We Don’t Teach Them Human Values

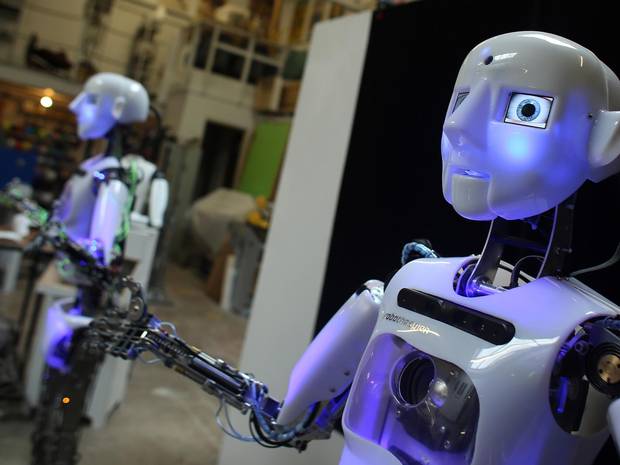

A well-known futurist warns that one day robots could “kill us out of kindness” if we don’t teach them to appreciate human values.

Nell Watson, a leading engineer and futurist, made the remark at The Conference in Malmo, Sweden, while discussing the dangers humanity could face if robots become extremely intelligent but lack values.

In her opinion, machines will soon reach the level of cognition of bumblebees, which are aware of the environment and social structure: “Machines are going to be aware of the environments around them and, to a small extent, they’re going to be aware of themselves.”

Ms Watson thinks that advances in robotics will soon dramatically change our society. Not only will we have robot servants at home, but a number of professions, including certain medical care and law jobs, will be undertaken by machines. However, the futurist does not see it as a negative thing, noting that robots could help us better understand ourselves and even persuade us to have healthier and more productive lifestyles.

On the other hand, Ms Watson worries about the other side of progress in artificial intelligence: “I can’t help but look at these trends and imagine how then shall we live? When we start to see super-intelligent artificial intelligences are they going to be friendly or unfriendly?” She emphasizes that teaching robots benevolence is not enough, as they may have a different ‘understanding’ of what is good or kind and what is not.

Thus, it is quite probable that one day the intelligent machines could simply decide that destroying the human race would be the right – or even the kindest – thing to do. “The most important work of our lifetime is to ensure that machines are capable of understanding human value,” says Ms Watson. “It is those values that will ensure machines don’t end up killing us out of kindness.”

But is it possible to embed something as irrational as human values into a machine that operates only by rules? Even if it is, robots could simply find a way to override them, since artificial intelligence relies only on rationality, while human intelligence tends to rely on intuition. And morals and values undoubtedly belong to the category of irrational, purely human concepts.

ABOUT THE AUTHOR

Anna LeMind is the owner and lead editor of the website Learning-mind.com, and a staff writer for The Mind Unleashed.

Featured image: TheIndependent
